IBM Watson Holobot - The Future of the Call Center

March 2018, New York

AR, Unity, Mobile App

Havas Worldwide

IBM Watson Holobot is an interactive augmented reality experience designed and built by Havas Worldwide.

Exhibition

The project was unveiled at the 2018 IBM Think Event.

My Contributions

Creative Programming

Visual and Animation Design

Interaction Design

Design Tools

Unity 3D

Vuforia Ground Recognition

Cinema 4D

Project Objectives

Allow users to explore the “Call Center of the Future” powered by IBM Watson through a unique augmented reality storytelling experience. The goal is to educate users on how Watson artificial intelligence technologies can add value to their call centers, provide more timely customer responses, streamline processes and improve efficiency.

Ideation

IBM Watson services leverage the latest advancements in artificial intelligence and machine learning, allowing companies to gain meaningful insights from unstructured business and user data. These insights can inform key business decisions that significantly improve operations and the customer experience.

The project involves creating an augmented reality application for iOS mobile and tablet devices that illustrates how Watson technologies revolutionize customer call centers. The user interacts with a virtual 3D call center building, exploring each floor. Each floor contains interactive objects, animations and video that demonstrate the benefits of leveraging IBM Watson services.

Storyboard

Last, add dynamic movement to each individual sphere on the Y axis only.

Prototype & Project Development

During project development I was the lead creative technologist, designing and implementing visuals, motion/interaction and animation of particle systems. The particle systems were a key element in demonstrating the complexity of a call center’s day-to-day operations and how Watson services can help to improve business processes.

Particle system visual design

In the storyboard shown above, during the intro stage, hundreds of particles appear and float through the air. The design uses spheres to represent common customer service requests and questions.

Below are the sphere visuals I designed following IBM’s brand guidelines.

Sphere Visual Reference From IBM

Below are the prototype variations I made, combining the effects of lighting, glow, shadows, reflection and transparency on spheres with different materials in the augmented reality environment. I tested matte, metallic and 2D neon sprite materials to bring the visuals as close to IBM’s brand design system as possible.

After many rounds of prototype iteration and feedback from our IBM clients, we decided on the 2D sprite effect because it fulfills IBM’s design requirements while maximizing rendering performance.

Specular with Glow Effect

Metallic with Transparent Effect

Sprite Effect in Blue and Grey Color

At different stages of the experience, the particles transition between three states: unsolved, processing and solved. Below are tests of the three sphere visuals corresponding to these states.

Three states of sphere

Dynamic content triggering with gaze interaction

For the intro particle system, I built a gaze interaction system that highlights content based on the position of the user and helps guide them to useful information and content in the scene.

Text Fades In When Gazed At
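A gaze check of this kind can be approximated by comparing the camera's forward direction with the direction to each piece of content. The following is a minimal sketch in Python, not the project's Unity code; the function names, the 10 degree cone, the 5 unit range and the 0.5 second fade are all illustrative assumptions.

```python
import math

def _sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def _dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def is_gazed_at(cam_pos, cam_forward, target_pos,
                max_angle_deg=10.0, max_distance=5.0):
    """Return True when the target lies inside the camera's gaze cone.

    cam_forward is assumed to be a non-zero vector (hypothetical input;
    in an AR app it would come from the device camera pose).
    """
    to_target = _sub(target_pos, cam_pos)
    distance = math.sqrt(_dot(to_target, to_target))
    if distance == 0.0 or distance > max_distance:
        return False
    forward_len = math.sqrt(_dot(cam_forward, cam_forward))
    # Cosine of the angle between the view direction and the target direction.
    cos_angle = _dot(cam_forward, to_target) / (forward_len * distance)
    return cos_angle >= math.cos(math.radians(max_angle_deg))

def step_alpha(alpha, gazed, dt, fade_seconds=0.5):
    """Move text opacity toward 1 while gazed at, toward 0 otherwise."""
    delta = dt / fade_seconds
    alpha = alpha + delta if gazed else alpha - delta
    return min(1.0, max(0.0, alpha))
```

Calling `is_gazed_at` once per frame for each label and feeding the result into `step_alpha` gives a text fade that follows the user's attention instead of switching on and off abruptly.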

Floor 1: Particle gathering and dynamic movement

On the 1st floor of the call center, our goal was to show how Watson services enable building, training and deploying smart chatbots that can respond to customer inquiries across multiple channels, including instant messaging, social media services and telephone. No matter which channel an inquiry comes from, customers quickly receive the same quality of service.

I implemented two sets of particle systems to contrast traditional, labor-intensive and inconsistent customer service with the smart chatbot solution provided by IBM Watson.

Spheres Gathering and Entering the 1st Floor

Floors 2 & 3: Particles forming a grid with dynamic movement

The second floor shows how Watson can answer up to 70% of incoming questions immediately. If Watson can't provide a solution right away, it can instantly search and discover answers from technical manuals, marketing materials and frequently asked questions, which improves customer satisfaction by 15%.

To illustrate how Watson can systematically and quickly sort through different types of customer inquiries, I created a particle system that begins with randomly placed spheres and then gradually arranges them into an ordered grid based on mathematical calculations.

Spheres Form a Grid with Dynamic Movement
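One simple way to get a random-to-grid transition is to interpolate each sphere from its start position to a grid slot and add a small vertical oscillation on top. The sketch below is written in Python for readability and is an assumption about how such an effect could be built, not the calculation used in the project; the easing function, grid layout and bob parameters are all hypothetical.

```python
import math

def grid_targets(count, columns, spacing):
    """Lay out `count` grid slots row by row in the XY plane."""
    targets = []
    for i in range(count):
        row, col = divmod(i, columns)
        targets.append((col * spacing, row * spacing, 0.0))
    return targets

def smoothstep(t):
    """Ease in and out so spheres accelerate and settle gently."""
    t = min(1.0, max(0.0, t))
    return t * t * (3.0 - 2.0 * t)

def sphere_position(start, target, progress, time, phase,
                    bob_amplitude=0.05, bob_speed=2.0):
    """Blend from the start position to the grid slot.

    progress runs from 0 (random placement) to 1 (ordered grid).
    A per-sphere phase keeps the Y-axis bobbing out of sync.
    """
    k = smoothstep(progress)
    x = start[0] + (target[0] - start[0]) * k
    y = start[1] + (target[1] - start[1]) * k
    z = start[2] + (target[2] - start[2]) * k
    # Dynamic movement is applied on the Y axis only.
    y += bob_amplitude * math.sin(bob_speed * time + phase)
    return (x, y, z)
```

Because the bobbing is added after the interpolation, the spheres keep moving once the grid has formed, so the ordered layout still reads as a living particle system.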

Floor 4: Particles forming a circle with dynamic movement

On the 4th floor, the particle system is built to demonstrate how Watson’s advanced analytics can be leveraged to discover deeper insights into customer behaviors, preferences and expectations. This allows the call center to offer better, faster and more personalized service.

I used a polar coordinate system to control the positioning of the spheres and programmatically form them into a circle while keeping each particle’s movement dynamic.

Spheres Form a Circle with Dynamic Movement
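The polar-coordinate placement can be sketched as follows: each sphere gets an evenly spaced angle, the angle and radius are converted to Cartesian coordinates, and a Y-axis oscillation keeps the ring moving. This is an illustrative Python sketch under stated assumptions (a horizontal ring in the XZ plane with Y pointing up, as in Unity, and hypothetical bob parameters), not the project's implementation.

```python
import math

def circle_position(index, count, radius, time,
                    center=(0.0, 0.0, 0.0),
                    bob_amplitude=0.05, bob_speed=2.0):
    """Place sphere `index` of `count` on a horizontal ring.

    Polar to Cartesian: x = r * cos(theta), z = r * sin(theta).
    """
    theta = 2.0 * math.pi * index / count
    x = center[0] + radius * math.cos(theta)
    z = center[2] + radius * math.sin(theta)
    # Using theta as the phase offset sends a wave around the ring.
    y = center[1] + bob_amplitude * math.sin(bob_speed * time + theta)
    return (x, y, z)

def ring(count, radius, time):
    """Positions of all spheres on the ring at a given time."""
    return [circle_position(i, count, radius, time) for i in range(count)]
```

Animating `radius` or blending from a previous layout toward these positions would give the forming motion, while the time-dependent Y term keeps each sphere's movement dynamic after the circle is complete.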

User Flow & Experience

Vuforia ground recognition anchors the virtual call center to a flat surface. The user taps on the display to begin the intro, and people, trees and streets animate into the scene. A voiceover begins as hundreds of particles rise up from the ground. At this point the user is expected to follow the particle movements and interact with them.

As the intro voiceover ends, the particles fall back towards the ground to bring the user’s attention back to the base of the building. When the user gazes at the base, a virtual call center building animates in from the ground, floor by floor.

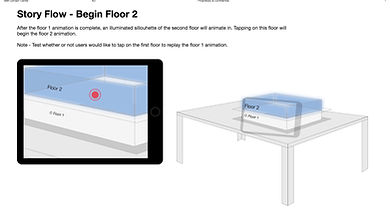

When the floor animation is complete, a silhouette of the next floor appears. Users can tap on the next floor to continue, or replay the content on the current floor.